
In the Settings tab, you find three more options to optimize the delta sink transformation.

When the Merge schema option is enabled, it allows schema evolution: any columns that are present in the current incoming stream but not in the target Delta table are automatically added to its schema. This option is supported across all update methods.

When Auto compact is enabled, after an individual write the transformation checks whether files can be compacted further, and runs a quick OPTIMIZE job (with 128 MB file sizes instead of 1 GB) to further compact files for the partitions that have the largest number of small files. Auto compaction helps coalesce a large number of small files into a smaller number of large files. It only kicks in when there are at least 50 files. Once a compaction operation is performed, it creates a new version of the table and writes a new file containing the data of several previous files in a compact, compressed form.

When Optimize write is enabled, the sink transformation dynamically optimizes partition sizes based on the actual data by attempting to write out 128 MB files for each table partition. This is an approximate size and can vary depending on dataset characteristics. Optimized writes improve the overall efficiency of both the writes and the subsequent reads, at different levels: the write itself becomes more efficient, and partitions are organized so that the performance of subsequent reads improves.

The partition column used for partition pruning (part_col in the sink example) need not be present in the source data.
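The Merge schema behaviour described above can be illustrated with a small sketch. This is plain Python that models a schema as a dictionary, not the data flow engine itself; the function name `merge_schema` and the columns `title` and `rating` are hypothetical and chosen only for illustration.

```python
def merge_schema(target_schema, incoming_schema):
    """Return the target schema extended with columns that exist only in the incoming stream."""
    merged = dict(target_schema)  # existing target columns keep their types
    for column, dtype in incoming_schema.items():
        if column not in merged:
            # Column is new to the target table: add it (schema evolution).
            merged[column] = dtype
    return merged


# Hypothetical schemas: the incoming stream carries one extra column, "rating".
target = {"movieId": "integer", "title": "string"}
incoming = {"movieId": "integer", "title": "string", "rating": "double"}

merged = merge_schema(target, incoming)
print(merged)
```

In this toy model the target never loses columns; it only gains the ones that appear in the incoming stream, which mirrors the description of the option.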

You can edit the sink properties in the Settings tab.

Vacuum deletes files older than the specified duration that are no longer relevant to the current table version. When a value of 0 or less is specified, the vacuum operation isn't performed.

Another property tells ADF what to do with the target Delta table in your sink. You can leave it as-is and append new rows, overwrite the existing table definition and data with new metadata and data, or keep the existing table structure but first truncate all rows, then insert the new rows.

For the Update method, when you select "Allow insert" alone or when you write to a new delta table, the target receives all incoming rows regardless of the Row policies set. If your data contains rows of other Row policies, they need to be excluded using a preceding Filter transform. When all Update methods are selected, a Merge is performed, where rows are inserted/deleted/upserted/updated as per the Row policies set using a preceding Alter Row transform.

Optimized write achieves higher throughput for write operations by optimizing the internal shuffle in Spark executors. As a result, you may notice fewer partitions and files that are of a larger size.

With Auto compact, after any write operation has completed, Spark automatically executes the OPTIMIZE command to reorganize the data, resulting in more partitions if necessary, for better read performance in the future.

The associated data flow script is:

moviesAltered sink(

With the partition pruning option under Update method (i.e. update/upsert/delete), you can limit the number of partitions that are inspected. Only partitions satisfying this condition are fetched from the target store. You can specify a fixed set of values that a partition column may take.

Delta sink script example with partition pruning. A sample script is given below:

DerivedColumn1 sink(

Delta will only read the 2 partitions where part_col = 5 and 8 from the target delta store instead of all partitions. part_col is a column that the target delta data is partitioned by.
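To make the effect of partition pruning concrete, here is a minimal sketch in plain Python. It models the target store as a dictionary keyed by the value of part_col and is only an illustration of the idea, not how Delta or the data flow engine is implemented; `prune_partitions`, `target_store`, and the ten partition values are hypothetical.

```python
def prune_partitions(partitions, allowed_values):
    """Keep only the partitions whose partition-column value is in the fixed set."""
    allowed = set(allowed_values)
    return {value: rows for value, rows in partitions.items() if value in allowed}


# Hypothetical target store partitioned by part_col, with values 1 through 10.
target_store = {
    value: [f"row-{value}-{i}" for i in range(3)]
    for value in range(1, 11)
}

# The prune condition names a fixed set of values for the partition column.
inspected = prune_partitions(target_store, [5, 8])
print(sorted(inspected))
print(len(inspected), "of", len(target_store), "partitions inspected")
```

Only the partitions for part_col = 5 and 8 are touched in this model; the other eight are never read, which is the saving the pruning option is meant to deliver for update, upsert, and delete operations.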
The below table lists the properties supported by a delta sink. Delta source script example source(output(movieId as integer, To import the schema, a data flow debug session must be active, and you must have an existing CDM entity definition file to point to.

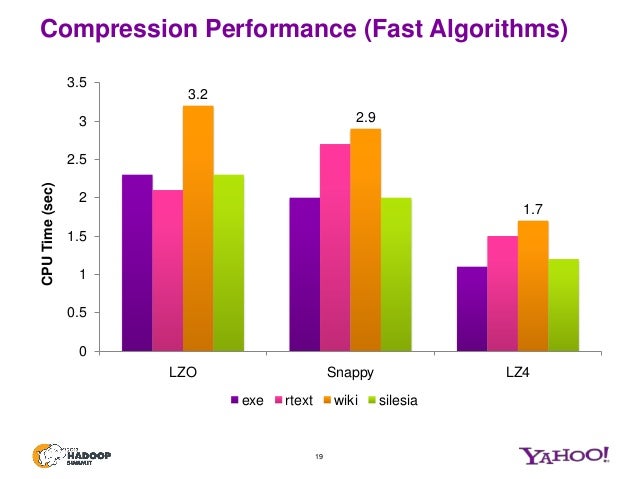

This allows you to reference the column names and data types specified by the corpus. To get column metadata, click the Import schema button in the Projection tab. If true, an error isn't thrown if no files are foundĭelta is only available as an inline dataset and, by default, doesn't have an associated schema. The container/file system of the delta lakeĬhoose whether the compression completes as quickly as possible or if the resulting file should be optimally compressed.Ĭhoose whether to query an older snapshot of a delta table You can edit these properties in the Source options tab. The below table lists the properties supported by a delta source. This connector is available as an inline dataset in mapping data flows as both a source and a sink.
